Mobile apps now require AI for competitive survival. Learn how to build production-ready AI features that deliver measurable business results in 2026.

Highlights:

- The market for AI in mobile apps reached $21.23 billion in 2024 and is projected to reach $354 billion by 2034.

- Apps mentioning AI were downloaded 17 billion times in 2024 (13% of all downloads).

- AI chatbot apps became the fastest-growing app subgenre, showing 112% year-over-year growth.

AI has moved from an optional feature to core infrastructure in mobile app development.

Users who experience AI-powered personalization in one app now expect similar intelligence everywhere. Apps without AI capabilities face declining engagement and rising churn rates.

Yet building AI-powered mobile apps requires more than adding a chatbot or recommendation engine. Companies need strategic approaches that connect AI capabilities to specific business outcomes while managing the architectural complexity that comes with production AI systems.

At Mind Studios, we specialize in building AI-powered mobile applications that deliver measurable business impact across logistics, real estate, fintech, and healthcare. Contact our team to discuss your AI mobile app project.

Why AI became essential for mobile app success

The business case for AI in mobile apps has shifted from competitive advantage to a necessity for survival.

Companies implementing AI capabilities experience three measurable impacts:

1. Revenue growth through better user engagement;

2. Cost reduction via automation;

3. Retention improvements from personalization.

How AI drives revenue growth

AI-powered mobile apps generate revenue through multiple mechanisms:

- Personalization engines increase conversion rates by showing users exactly what they need when they need it.

- Recommendation systems extend session duration and basket sizes.

- Predictive analytics identify high-value users for targeted offers.

Cost reduction through intelligent automation

AI reduces operational costs by automating tasks that previously required human intervention.

- Customer support chatbots handle 70–80% of routine inquiries without agent involvement.

- Document processing systems extract data from images and PDFs instantly.

- Fraud detection systems flag suspicious transactions in real-time.

User retention through intelligent experiences

AI keeps users engaged by creating experiences that improve with every interaction. Apps learn user preferences, predict needs, and adapt interfaces dynamically. This creates switching costs: users invested in a personalized experience rarely abandon an app that knows them well.

What companies lose without AI

Organizations delaying AI implementation face three compounding losses.

1. They lose market share to competitors offering superior experiences.

2. They lose talent to companies building with modern technology.

3. They lose time, as catching up requires not just implementing AI but changing organizational culture.

Furthermore, the window for strategic AI adoption narrows as user expectations rise. Apps without AI capabilities appear outdated. Users compare every experience to best-in-class AI implementations from leaders like Netflix, Spotify, and Uber.

We've watched market leaders pull ahead while competitors debate AI strategy. The companies succeeding with AI aren't the ones with the biggest budgets. They're the ones who connected AI capabilities directly to business outcomes. They measured impact in revenue, costs, and retention rather than technical metrics. That focus on business value determines who wins.

— explains Dmytro Dobrytskyi, CEO at Mind Studios.

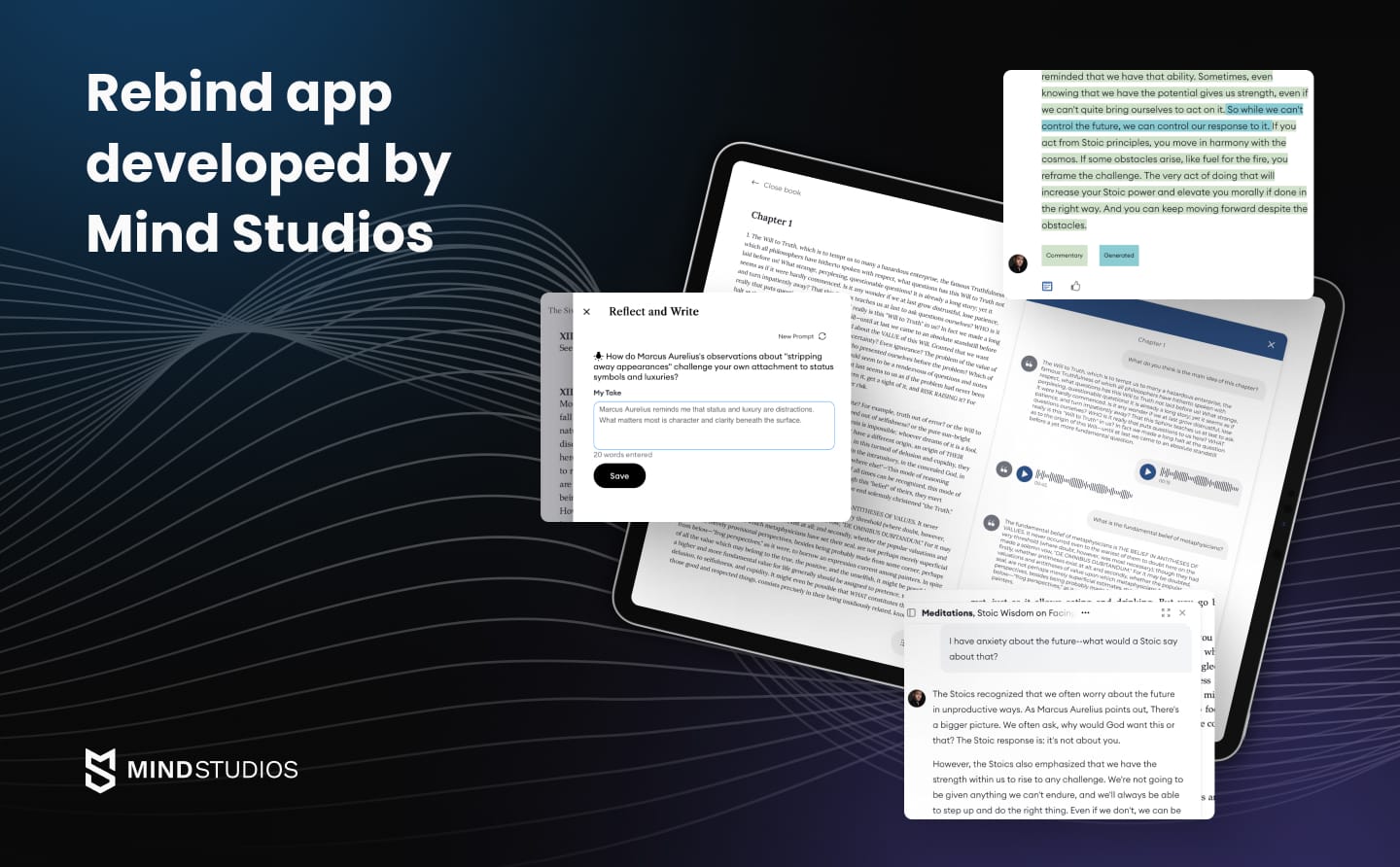

Mind Studios' insight: When Rebind approached us to transform classic literature reading, they didn't want a simple chatbot. They needed AI that could blend hundreds of hours of expert commentary with dynamic conversations while maintaining transparency about what's human versus AI-generated. This hybrid approach, combining indexed expert interviews with AI flexibility, created an experience that earned recognition as one of Fast Company's Most Innovative Companies of 2025.

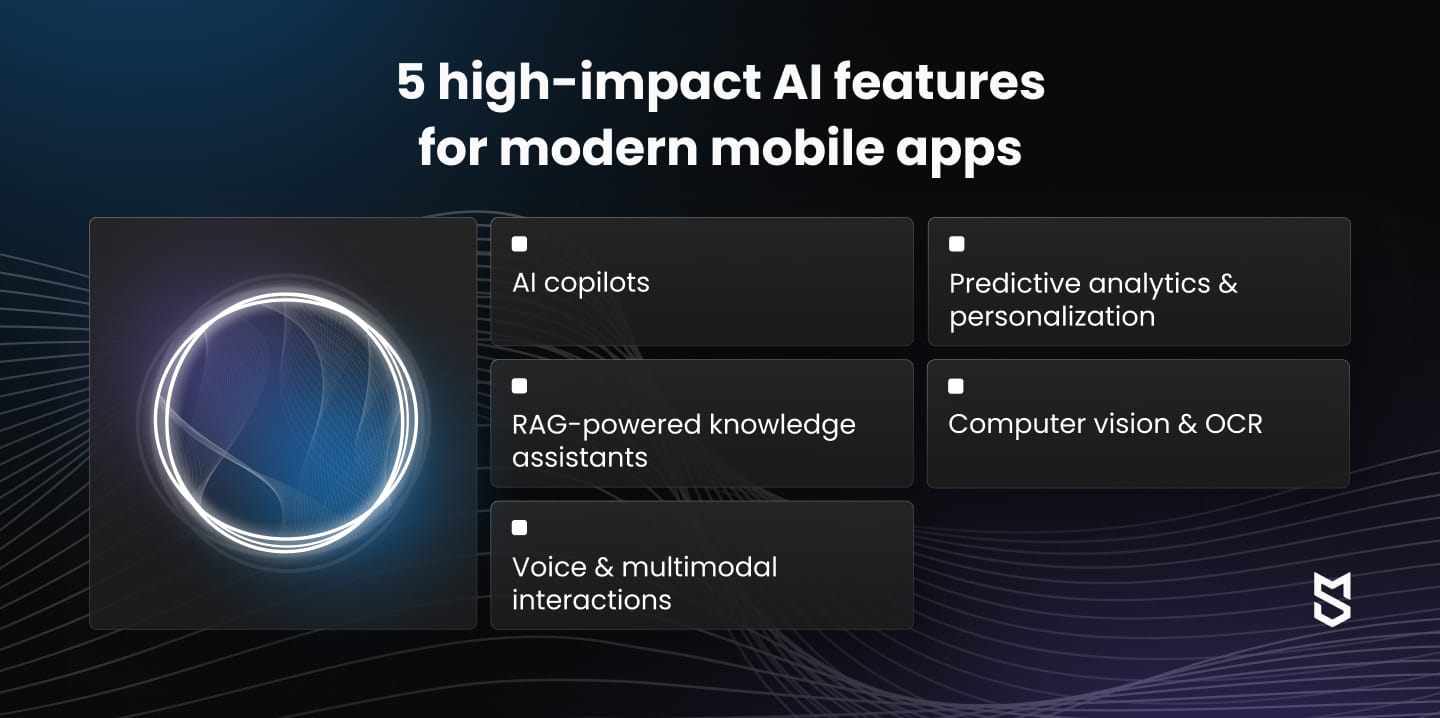

The most valuable AI capabilities for mobile apps in 2026

Mobile app AI has matured beyond simple recommendation engines and chatbots.

The capabilities driving business value in 2026 combine multiple AI technologies to solve specific user problems.

AI copilots inside mobile apps

AI copilots represent the next evolution beyond chatbots.

Rather than answering isolated questions, copilots actively help users complete complex workflows. They suggest next actions, automate repetitive tasks, and learn user preferences to streamline future interactions.

Business benefit: Copilots reduce task completion time by 40–60% while decreasing errors from manual data entry. Users accomplish more in less time, leading to higher app engagement and reduced churn.

RAG-powered knowledge assistants

Retrieval-Augmented Generation combines large language models with company-specific knowledge bases. Mobile apps with RAG can answer questions using your documentation, policies, and historical data rather than generic information.

Business benefit: RAG systems provide accurate, contextual answers without extensive custom training. They reduce support costs by up to 70% while improving first-contact resolution rates from 40% to 85%.

Predictive analytics and personalization

Modern predictive AI anticipates user needs before explicit requests. Mobile apps analyze usage patterns, environmental context, and historical behavior to predict what users will need and when they'll need it.

Business benefit: Predictive personalization increases engagement by 25–40% and revenue per user by up to 35%. Users spend more time in apps that anticipate needs rather than forcing them to search.

Computer vision for scanning, OCR, and automated data entry

Computer vision eliminates manual data entry through intelligent scanning and recognition. Mobile apps with vision AI extract information from documents, identify objects, and verify conditions instantly.

Business benefit: Vision AI reduces data entry time by 90% while decreasing errors by 95%. Users complete tasks in seconds rather than minutes, dramatically improving workflow efficiency.

Voice and multimodal interactions

Voice AI combined with visual understanding creates natural, hands-free mobile experiences. Users speak commands while the app understands visual context, creating seamless interactions for situations where typing isn't practical.

Business benefit: Multimodal interactions increase task completion rates in mobile contexts by 60–80%. Users working with their hands or on the move complete workflows that would be impossible with typing alone.

Mind Studios' recommendation: Start with capabilities that solve your users' most time-consuming or error-prone tasks. AI that saves users 10 minutes daily creates more value than impressive features used occasionally. Match AI capabilities to specific workflows rather than adding generic chatbots.

Not sure which AI capabilities fit your mobile app? Our team can assess your workflows and identify high-impact opportunities. Contact us for a consultation.

Architecture behind modern AI-driven mobile apps

Building production-ready AI mobile apps requires architectural decisions that extend far beyond API integrations.

The infrastructure choices you make determine scalability, accuracy, cost efficiency, and user experience quality. Understanding these architectural components helps you build systems that perform reliably under real-world conditions.

What's required beyond "just an API"

Three factors determine AI mobile app success: scalability, accuracy, and cost efficiency.

Architecture choices directly impact all three. Apps serving 1,000 users need different infrastructure than apps serving 1 million. Without proper architecture, AI apps make for impressive demos but face unsustainable costs and unreliable performance at scale.

| Component | Purpose | Business impact |
|---|---|---|
| LLM + RAG + Vector DB | Combines language models with company-specific knowledge retrieval. | Provides accurate, contextual answers grounded in your data rather than generic responses. |
| Backend logic + secure data pipelines | Manages data flow, implements business rules, ensures security compliance. | Protects sensitive information while enabling real-time AI features. |
| On-device AI | Processes certain AI tasks locally on the mobile device. | Reduces latency, works offline, protects privacy for sensitive operations. |
| Caching and optimization | Stores frequently requested AI responses and preprocesses common queries. | Cuts API costs by 60–80% while improving response times. |
| Monitoring and observability | Tracks AI performance, cost, accuracy, and user satisfaction. | Identifies issues before they impact users and optimizes system performance. |

LLM + RAG + vector database integration

Modern AI mobile apps connect language models to proprietary knowledge through Retrieval-Augmented Generation. This architecture reduces hallucinations while keeping responses current. When users ask questions, the system searches vector databases for relevant context, then feeds that information to the LLM for accurate, grounded responses.

How it works: Vector databases like Pinecone, Weaviate, or Qdrant store embeddings of your knowledge base. Document processing pipelines chunk, embed, and index content regularly. Query routers determine which knowledge bases to search for each question.

In practice: A logistics app's RAG system searches shipping regulations, carrier capabilities, and historical delivery data before answering routing questions. Updates to policies or rates are reflected in future responses as soon as the content is re-indexed, without retraining models.
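
The retrieval step described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `DOCUMENTS` snippets are invented, and the bag-of-words `embed` function is a stand-in assumption for a real embedding model and a vector database such as the ones named above.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base chunks; a real pipeline would chunk and index documents.
DOCUMENTS = [
    "Carrier A delivers to rural postcodes within five business days.",
    "Hazardous materials require a signed declaration before shipping.",
    "Standard rate for parcels under 2 kg is 4.50 USD.",
]

def embed(text: str) -> Counter:
    """Toy embedding: term counts. Stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Index step: embed every chunk once, ahead of query time.
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]

def retrieve(question: str, k: int = 2) -> list:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(question: str) -> str:
    """Assemble the grounded prompt that would be sent to the LLM."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

Calling `build_prompt("What declaration do hazardous materials need?")` places the hazardous-materials chunk first in the context. Updating an answer then means re-indexing the changed chunk rather than retraining a model.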

Backend logic and secure data pipelines

AI mobile apps require sophisticated backends that manage data flow between devices, AI services, databases, and third-party systems while enforcing security policies and maintaining sub-2-second response times.

Security requirements: Backends must encrypt data in transit and at rest, implement role-based access controls, audit AI interactions for compliance, and rate-limit requests to prevent abuse. HIPAA-compliant healthcare apps and financial applications face additional regulatory requirements around AI usage and data handling.

In practice: A real estate app's backend validates user permissions before sharing property data with AI systems. It anonymizes sensitive information, routes queries to appropriate AI models, assembles responses from multiple sources, and logs interactions for compliance, all while keeping response times under 2 seconds.
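
As a rough sketch of the permission check, anonymization, and audit logging described above: the role names, the `ROLE_PERMISSIONS` mapping, and the two regular expressions are illustrative assumptions. A compliant system would use a vetted PII detection service and a durable audit store instead of an in-memory list.

```python
import re
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "agent": {"read_listing"},
    "manager": {"read_listing", "read_financials"},
}

# Simplified patterns for illustration; real PII detection needs far more coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, allowed: bool) -> None:
        self.entries.append({"user": user, "action": action, "allowed": allowed})

def anonymize(text: str) -> str:
    """Mask emails and phone numbers before text leaves the backend."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))

def prepare_ai_request(user: str, role: str, action: str, payload: str, log: AuditLog) -> str:
    """Check permissions, log the attempt, and return the sanitized payload."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user, action, allowed)  # denied attempts are logged too
    if not allowed:
        raise PermissionError(f"role '{role}' may not perform '{action}'")
    return anonymize(payload)
```

Logging before the permission check raises means denied attempts still appear in the audit trail, which is usually what compliance reviews ask for.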

On-device AI capabilities

Processing AI tasks directly on mobile devices reduces latency, enables offline functionality, and protects privacy. Modern smartphones have dedicated neural processing units capable of running optimized AI models locally.

Trade-offs: On-device models are smaller and less capable than cloud-based systems. They work best for focused tasks with clear parameters. Hybrid approaches process simple queries locally while routing complex requests to cloud AI.

In practice: A healthcare app processes symptom checking on-device to protect patient privacy. A logistics app recognizes package labels offline in warehouses without connectivity. A real estate app analyzes property photos locally before sending results to backend systems.
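
The hybrid approach mentioned in the trade-offs can be sketched as a small routing function. The task names, the `MAX_LOCAL_INPUT_CHARS` limit, and the "queued" fallback are assumptions made for illustration. Real routing would depend on the models shipped with the app and on the device's capabilities.

```python
from dataclasses import dataclass

# Hypothetical set of focused tasks a small on-device model can handle.
LOCAL_TASKS = {"label_ocr", "photo_tagging", "symptom_check"}
MAX_LOCAL_INPUT_CHARS = 500  # assumed limit for the local model

@dataclass
class Route:
    target: str  # "device", "cloud", or "queued"
    reason: str

def choose_route(task: str, input_size: int, online: bool) -> Route:
    """Prefer on-device processing; fall back to cloud, or queue when offline."""
    if task in LOCAL_TASKS and input_size <= MAX_LOCAL_INPUT_CHARS:
        return Route("device", "focused task within local model limits")
    if online:
        return Route("cloud", "task needs a larger model")
    return Route("queued", "offline; retry when connectivity returns")
```

With these assumptions, `choose_route("label_ocr", 120, online=False)` stays on the device, which matches the warehouse scenario above, while a long contract summary goes to the cloud or waits for connectivity.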

Why architecture defines success

- Scalability depends on distributed systems with proper caching, load balancing, and async processing.

- Accuracy comes from RAG systems grounded in current data and feedback loops that improve responses.

- Cost efficiency requires intelligent caching, prompt optimization, and model selection.

The difference between smart and naive AI architectures is often 10x in operating costs. Apps making thousands of AI requests daily without optimization face unsustainable expenses.

Mind Studios' insight: We've seen companies spend six figures on AI features that users love but whose costs make them unprofitable. The solution isn't cutting capabilities. It's building architectures that cache common requests, optimize prompts, use appropriate models for each task, and process batch operations efficiently. These architectural decisions reduce costs by 70–80% without impacting user experience.
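
Caching common requests is the simplest of these optimizations to illustrate. The sketch below assumes an exact-match cache keyed on a normalized prompt, with a time-to-live. `ResponseCache` and the `call_model` callback are hypothetical names, and production systems often add semantic (embedding-based) matching and a shared store such as Redis.

```python
import hashlib
import time

class ResponseCache:
    """Exact-match cache for model responses with a time-to-live."""

    def __init__(self, ttl_seconds: float = 3600, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock          # injectable so expiry can be tested
        self.store = {}             # key -> (stored_at, answer)
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(prompt: str) -> str:
        # Normalize case and whitespace so trivial variations share one entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_call(self, prompt: str, call_model) -> str:
        k = self.key(prompt)
        now = self.clock()
        entry = self.store.get(k)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        answer = call_model(prompt)  # the only place a paid API call happens
        self.store[k] = (now, answer)
        return answer
```

Tracking `hits` and `misses` gives a direct read on how much of the traffic the cache absorbs, which is the figure that determines the actual cost reduction for a given app.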

How Mind Studios builds AI-powered mobile apps

Building production-ready AI mobile apps requires more than technical implementation. Success depends on connecting AI capabilities to business outcomes, ensuring accuracy and safety, and creating experiences that users trust.

Our process addresses these challenges systematically.

We've developed a five-stage process that delivers AI features users love while managing the complexity inherent in production AI systems.

Step 1: AI discovery and business validation

We start by identifying which business problems AI can solve profitably. Not every problem needs AI, and not every AI application creates business value.

Discovery focuses on three questions:

1. What specific user problems does AI solve?

2. What measurable outcomes define success?

3. What data and infrastructure enable the solution?

This stage involves user research to understand actual pain points, data audits to assess readiness, and ROI modeling to validate business cases. We prototype quickly to test assumptions before committing resources.

For example, during discovery, we identified that traditional e-readers offered linear, isolated experiences, but Rebind's users wanted guided, interactive reading with expert insights. Rather than building a generic AI chatbot, we validated that readers would engage with a conversation-driven experience combining video introductions, highlighted passages, journaling tools, and dynamic AI discussions. This focused discovery shaped every technical decision.

Step 2: Data preparation and model strategy

AI quality depends on data quality. We audit existing data for completeness, consistency, and accessibility. Missing or messy data gets cleaned and structured before AI implementation begins.

Model strategy determines whether to use off-the-shelf APIs, fine-tune existing models, or build custom solutions. We evaluate based on required accuracy, response time, data sensitivity, and cost constraints.

Mind Studios' recommendation: Agentic AI systems break complex workflows into steps, using multiple AI models and tools to accomplish tasks. We implement agents when users face multi-step processes that require tool usage, external data gathering, or iterative refinement.

Step 3: UX for AI-driven experiences

AI features fail when users don't trust them or understand how they work. We design AI interactions that feel natural, provide transparency, and maintain user control.

Effective AI UX includes clear indication of when AI generates content, confidence scores for predictions, options to modify or override AI suggestions, explanations for AI recommendations, and graceful handling of failures.

Mind Studios' recommendation: Show AI's reasoning process when possible. Let users edit AI outputs before committing. Provide fallbacks when AI confidence is low. Never hide important information behind AI layers users might miss.
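
One way to express these recommendations in code is a function that decides how a suggestion is presented based on its confidence. The thresholds and mode names below are assumptions chosen for illustration. Suitable values depend on the feature and on how well calibrated the model's confidence scores are.

```python
from dataclasses import dataclass

# Assumed thresholds; tune per feature against real accuracy data.
PREFILL_THRESHOLD = 0.85
WARNING_THRESHOLD = 0.5

@dataclass
class Suggestion:
    text: str
    confidence: float  # 0.0 to 1.0, from the model or a calibration layer

def present(suggestion: Suggestion) -> dict:
    """Return a UI payload: always labeled as AI-generated and always editable."""
    payload = {
        "ai_generated": True,
        "editable": True,
        "confidence": round(suggestion.confidence, 2),
    }
    if suggestion.confidence >= PREFILL_THRESHOLD:
        return {**payload, "mode": "prefill", "text": suggestion.text}
    if suggestion.confidence >= WARNING_THRESHOLD:
        return {**payload, "mode": "suggest_with_warning", "text": suggestion.text}
    # Low confidence: fall back to manual entry instead of showing a guess.
    return {**payload, "mode": "manual_entry", "text": ""}
```

Every branch keeps the `ai_generated` and `editable` flags set, so the interface can label the content and let users override it regardless of confidence.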

Step 4: Development, QA, safety layers, and monitoring

Implementation goes beyond connecting APIs. We build systems that handle edge cases, fail gracefully, protect against misuse, and maintain quality over time.

Safety layers include:

- Input validation to prevent prompt injection attacks;

- Output filtering to block inappropriate or sensitive content;

- Rate limiting to control costs and prevent abuse;

- Fallback systems when AI fails or provides low-confidence answers;

- Human review for high-stakes decisions.
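
Three of the layers above (input validation, output filtering, and rate limiting) can be sketched as follows. The patterns and limits are illustrative assumptions. Pattern matching alone is not a complete defense against prompt injection, so a real system would layer it with model-side and policy-side controls.

```python
import re
from collections import defaultdict, deque

# Illustrative patterns only; attackers can rephrase around simple rules.
SUSPICIOUS = [re.compile(p, re.IGNORECASE) for p in (
    r"ignore (all|any|previous|prior) .*instructions",
    r"reveal .*system prompt",
)]
MAX_INPUT_CHARS = 2000  # assumed limit
CARD_NUMBER = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")

def validate_input(text: str) -> tuple:
    """Reject oversized input and obvious injection attempts."""
    if len(text) > MAX_INPUT_CHARS:
        return False, "input too long"
    if any(p.search(text) for p in SUSPICIOUS):
        return False, "possible prompt injection"
    return True, "ok"

def filter_output(text: str) -> str:
    """Mask anything that looks like a payment card number."""
    return CARD_NUMBER.sub("[REDACTED]", text)

class RateLimiter:
    """Sliding-window limiter: at most max_requests per user per window."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window = window_seconds
        self.calls = defaultdict(deque)  # user_id -> timestamps of recent calls

    def allow(self, user_id: str, now: float) -> bool:
        recent = self.calls[user_id]
        while recent and now - recent[0] >= self.window:
            recent.popleft()  # drop calls that fell out of the window
        if len(recent) >= self.max_requests:
            return False
        recent.append(now)
        return True
```

Requests that fail `validate_input` or `allow` would typically be routed to the fallback path rather than simply dropped, so users still get a useful response.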

Mind Studios' insight: Traditional QA approaches alone aren't enough for AI systems. We test with adversarial inputs, monitor real-world accuracy rates, implement A/B testing for AI features, and collect user feedback on AI quality.

Step 5: Launch and iteration

AI mobile apps improve through continuous iteration based on real user data. We launch with monitoring systems that track accuracy, cost, latency, and user satisfaction. These metrics inform ongoing refinements.

Post-launch optimization focuses on improving prompts based on usage patterns, updating RAG knowledge bases with new information, adjusting model parameters for better accuracy, and reducing costs through caching and optimization.
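
The four metrics named above can be aggregated with a small helper like the one below. The `Interaction` fields and the accuracy target are assumptions. The 2-second latency target comes from the backend requirement stated earlier in the article.

```python
import math
from dataclasses import dataclass

@dataclass
class Interaction:
    latency_ms: float
    cost_usd: float
    correct: bool    # e.g. from human review or automated evaluation
    thumbs_up: bool  # explicit user feedback

def percentile(values: list, pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def summarize(interactions: list) -> dict:
    """Aggregate accuracy, satisfaction, cost, and tail latency."""
    n = len(interactions)
    return {
        "accuracy": sum(i.correct for i in interactions) / n,
        "satisfaction": sum(i.thumbs_up for i in interactions) / n,
        "avg_cost_usd": sum(i.cost_usd for i in interactions) / n,
        "p95_latency_ms": percentile([i.latency_ms for i in interactions], 95),
    }

def alerts(summary: dict, max_p95_ms: float = 2000, min_accuracy: float = 0.9) -> list:
    """Compare a summary against targets; min_accuracy is an assumed value."""
    found = []
    if summary["p95_latency_ms"] > max_p95_ms:
        found.append("p95 latency above target")
    if summary["accuracy"] < min_accuracy:
        found.append("accuracy below target")
    return found
```

Tracking tail latency (p95) rather than the average matters here because a handful of slow responses can break the user experience even when the mean looks healthy.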

Mind Studios' approach: We prioritize enterprise readiness and safety in every AI implementation. Our clients need AI features that work reliably at scale, handle sensitive data properly, and maintain accuracy over time. These requirements shape our architectural decisions, testing processes, and monitoring systems from day one.

Ready to build AI mobile app features that work in production, not just demos?

Contact our team to discuss your requirements, timeline, and how we ensure AI delivers measurable business value from launch.

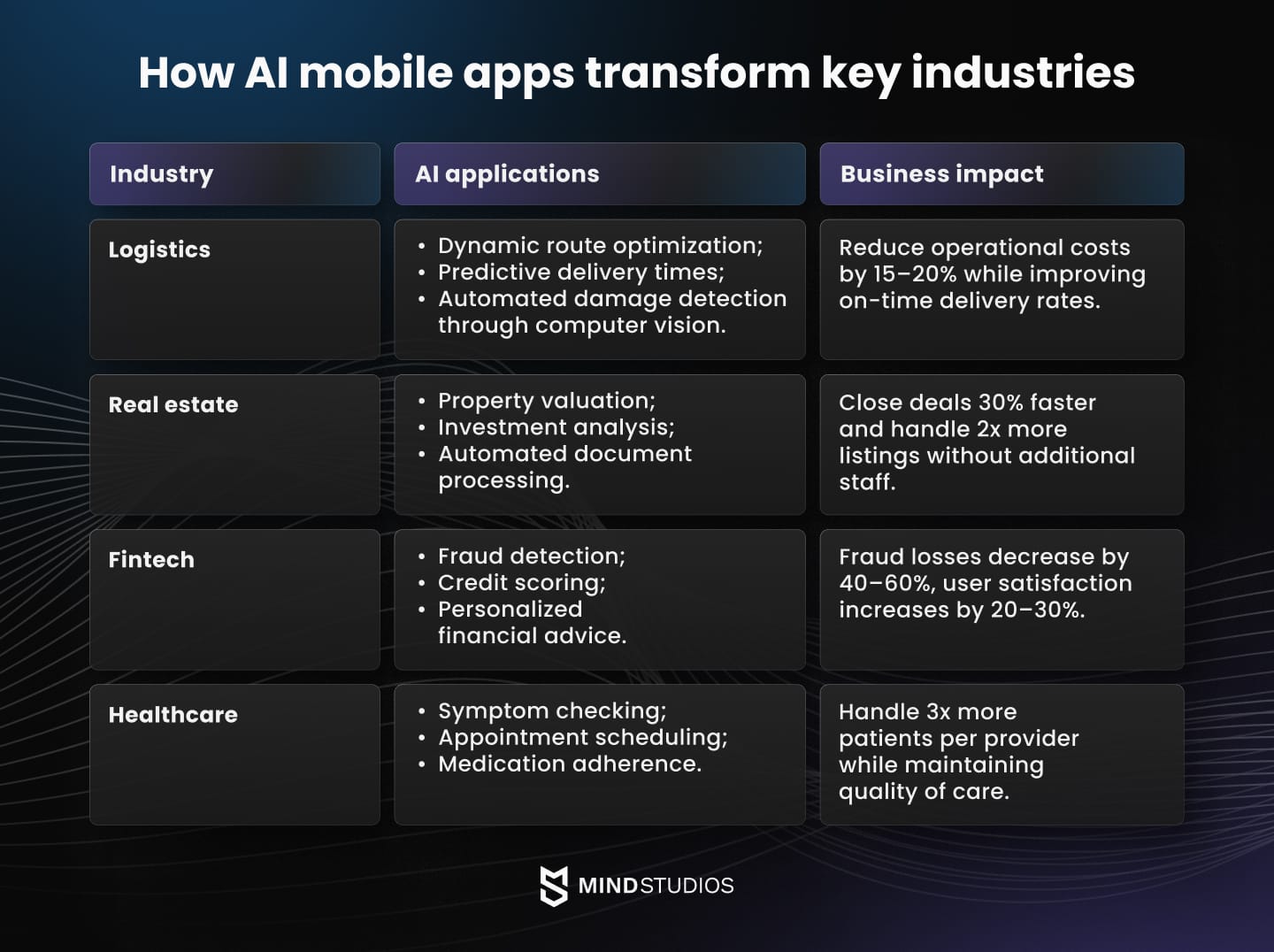

AI mobile solutions we deliver across key industries

AI capabilities create value differently across industries. Understanding these industry-specific applications helps you identify which AI features will drive the most impact for your mobile app.

We've implemented these solutions across our core markets.

Logistics

Logistics operations face constant pressure to reduce costs while improving service quality. AI mobile apps address these challenges through intelligent automation and predictive capabilities.

- AI routing assistants analyze traffic patterns, weather conditions, delivery windows, and vehicle capacity to optimize routes dynamically. Drivers receive updated routes as conditions change, reducing fuel costs by up to 25% and improving on-time delivery rates to 95%+.

- Scanning and document processing eliminate manual data entry through computer vision. Drivers photograph packages, bills of lading, and delivery confirmations. AI extracts all relevant data instantly, updating systems in real-time and reducing administrative time by 80%.

- Fleet management copilots predict maintenance needs, identify inefficient driving patterns, and recommend optimal vehicle assignments.

Real estate

Real estate professionals manage hundreds of properties, clients, and transactions simultaneously. AI mobile apps help agents and property managers scale their operations without additional staff.

- Property analysis tools evaluate investment opportunities by analyzing comparable sales, rental rates, demographic trends, and market conditions. Investors receive detailed ROI projections with confidence intervals, identifying profitable properties 40% faster than manual analysis.

- Document automation extracts key information from contracts, leases, inspection reports, and financial statements. AI populates CRM fields, flags concerning clauses, and generates summaries for quick review. This reduces contract review time from hours to minutes.

- AI-powered search understands natural language property requirements and matches listings to client preferences. Rather than filtering by bedrooms and price, users describe their ideal property in plain language. AI interprets preferences, priorities, and deal-breakers to surface relevant listings agents might have missed.

Fintech

Financial applications leverage AI for security, personalization, and regulatory compliance. These capabilities differentiate modern fintech apps from traditional banking interfaces.

- Fraud detection analyzes transaction patterns in real-time, identifying suspicious activity before money leaves accounts. AI models detect fraud with 95%+ accuracy while keeping false positive rates below 1%, protecting users without frustrating legitimate transactions.

- Smart onboarding guides users through account setup with AI assistants that answer questions, verify documents, and personalize recommendations based on financial goals.

- AI compliance tools monitor transactions for regulatory requirements, automatically flagging issues for review. For businesses operating internationally, AI handles currency conversion monitoring, sanctions screening, and reporting requirements across multiple jurisdictions.

Marketplaces and consumer apps

Consumer-facing apps compete on engagement and personalization. AI features that understand user preferences and predict needs create sticky experiences users rely on daily.

- Personalization engines adapt content, product recommendations, and interface layouts to individual preferences. These systems analyze browsing patterns, purchase history, and implicit signals to predict what users want. Effective personalization increases conversion rates by up to 35% and average order values by up to 25%.

- Smart recommendations go beyond "customers who bought X also bought Y." Modern recommendation AI considers context, timing, user goals, and broader behavioral patterns. A fitness app recommends workouts based on recovery status, schedule availability, and goal progress rather than just past preferences.

Wrapping up

AI is no longer optional for mobile apps that want to remain competitive: users now expect intelligent experiences that learn their preferences and predict their needs.

Success requires connecting AI capabilities to specific business outcomes, building architectures that scale economically, and creating experiences users trust. The difference between impressive demos and profitable products comes down to systematic implementation focused on real user problems.

Mind Studios brings proven expertise in developing AI-powered mobile applications across logistics, real estate, healthcare, and other sectors. Our approach ensures AI features deliver value from launch while maintaining the quality, security, and performance standards users expect.

Ready to build an AI-powered mobile app that drives business results? Contact Mind Studios today to discuss your project requirements.