Serverless architecture promises faster launches and lower costs, but many SaaS companies discover vendor lock-in and unpredictable expenses only after their systems scale. This guide shows you how to evaluate serverless decisions before they become expensive constraints.

Highlights:

- The global serverless market reached $24.51 billion in 2024, projecting $52.13 billion by 2030.

- Over 70% of AWS customers use serverless, with adoption growing across major providers.

- Vendor lock-in and cost unpredictability remain the top concerns preventing broader enterprise adoption.

Teams often choose serverless to move fast and reduce operational complexity. The approach works beautifully at first: automatic scaling, no servers to manage, and pay-per-execution pricing sound ideal. The problems surface later, typically 18–36 months into production, when migration becomes prohibitively expensive.

Serverless delivers genuine value when matched to appropriate workloads. Vendor dependency deepens gradually as your architecture integrates more cloud-native services. Budget predictability deteriorates as usage patterns evolve. Architectural flexibility erodes as dependencies multiply.

While others discuss serverless in abstract technical terms, Mind Studios helps businesses make architecture decisions that survive growth. Get in touch with our team to validate your architecture choices before costs spiral.

This guide helps you decide when serverless accelerates your business and when it creates long-term constraints you cannot afford.

What serverless architecture really means (beyond marketing)

The term "serverless" is misleading. Servers still exist, you simply stop managing them. Understanding how serverless architecture works reveals both its power and its limitations.

How serverless actually works

At its core, serverless operates on an event-driven execution model. Your code runs only when triggered by specific events: HTTP requests, database changes, file uploads, scheduled tasks, or messages in a queue. Between executions, nothing runs, nothing idles, and nothing costs money.

This model consists of two main components:

- Function-as-a-Service (FaaS): Platforms like AWS Lambda, Google Cloud Functions, and Azure Functions execute your custom code in response to events.

- Backend-as-a-Service (BaaS): Provides ready-made services for common needs like managed databases, authentication systems, file storage, messaging queues, and API gateways.

An application designed with serverless architecture principles typically combines both. Your functions contain business logic, while BaaS services handle infrastructure concerns.

For example, a user uploads an image (event) → triggers a function → resizes the image → stores it in managed storage → updates a managed database → sends a notification through a messaging service.

Each component scales independently. Heavy image processing spawns more function instances automatically. Light database operations require fewer resources. This granular scaling is serverless's primary technical advantage.

Why seamless integration creates vendor lock-in

Serverless is an ecosystem decision, not a single technology.

Your Lambda functions might depend on DynamoDB for data, EventBridge for orchestration, S3 for storage, API Gateway for HTTP routing, and Cognito for authentication.

This dependency structure reveals a critical principle: serverless optimizes for velocity, not portability. The seamless integration within one ecosystem makes cross-provider migration expensive.

State management also works differently. Traditional applications maintain state in memory between requests. Serverless functions must externalize all state because they terminate after execution: session data goes to managed caches, transaction state moves to databases, and workflow coordination requires orchestration services. This stateless design enables scaling but adds complexity and cost.

The technical limits that eliminate serverless for many workloads

Cold starts add 450–3200ms latency for standard functions, or 1800–5000ms with VPC networking. For latency-sensitive applications, this unpredictability creates problems.

FYI: "Cold start" is a serverless architecture term that refers to the latency/delay that occurs when a function is invoked after being idle.

Resource limits matter:

- AWS Lambda: 15 minutes execution time, 10GB memory maximum

- Azure Functions: 230 seconds for HTTP requests

These boundaries eliminate serverless for long-running processes, batch jobs, or memory-intensive operations.

The billing model creates unpredictability. You pay for request count, execution duration, and memory allocation. A poorly optimized function running millions of times daily generates unexpected bills. Meanwhile, the traditional infrastructure would show performance degradation instead of budget spikes.

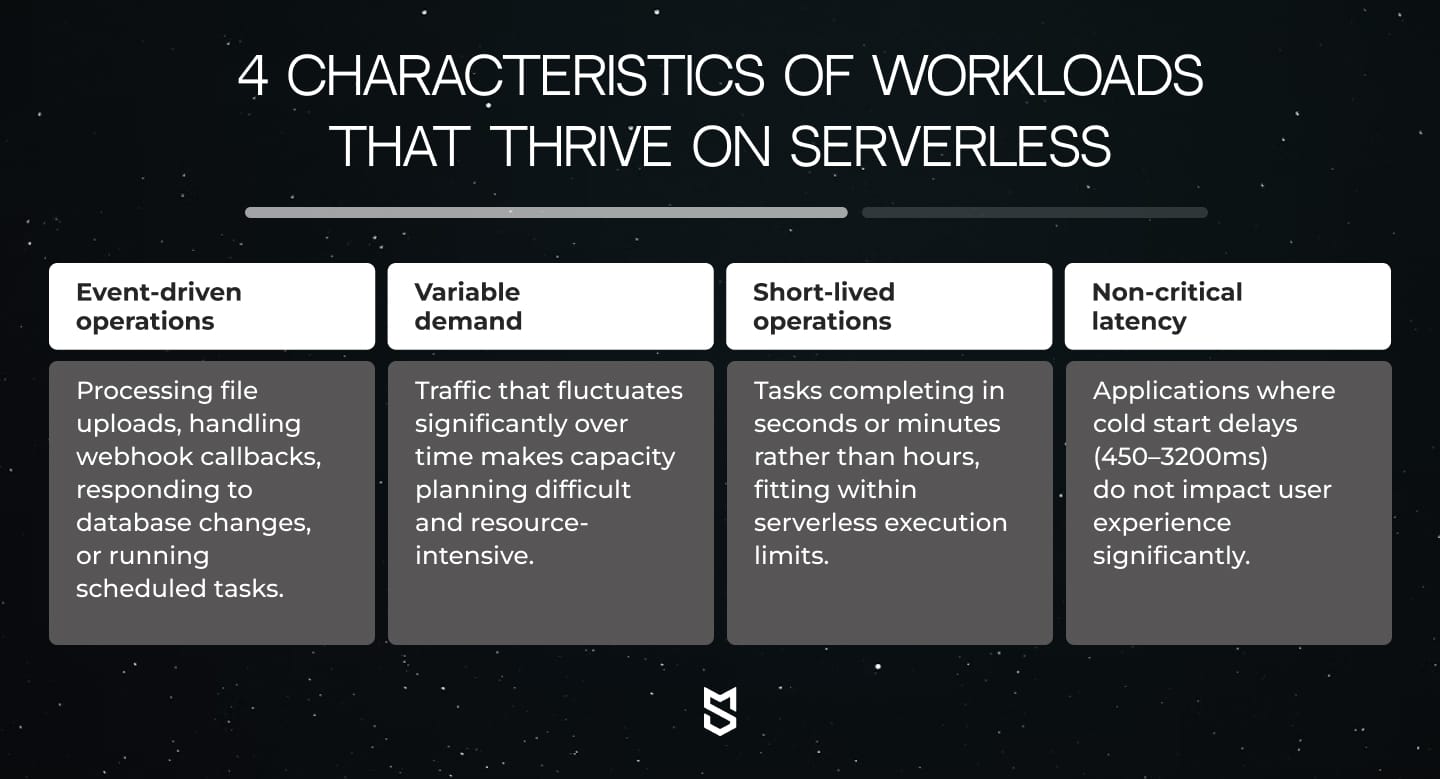

Serverless works exceptionally well when your workload aligns with its strengths: event-driven, variable load, stateless operations, and short execution times. When your needs diverge from this pattern, the gaps between marketing promises and operational reality become expensive.

Why serverless adoption accelerated despite long-term constraints

The path from physical servers to serverless architecture represents a fundamental shift in how businesses approach infrastructure responsibility.

Understanding this evolution explains why serverless adoption reached over 70% among AWS users in 2024, and why many companies later regret that decision.

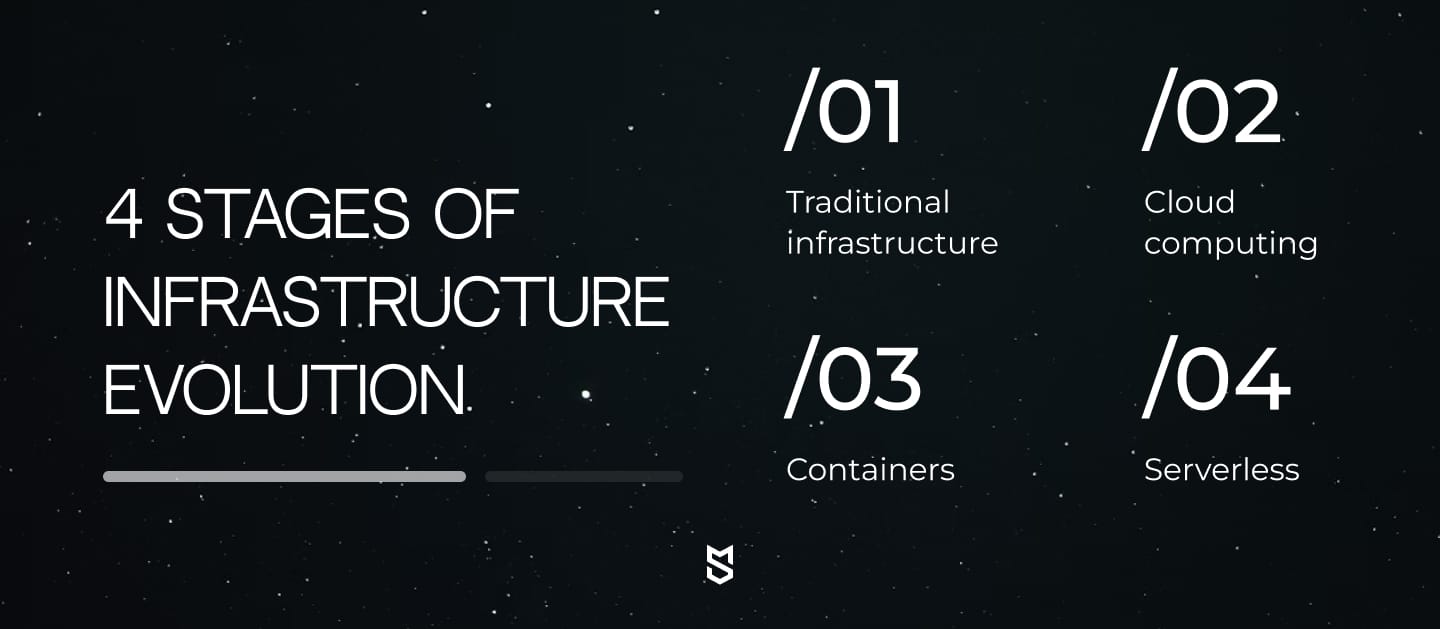

The evolution happened in four stages

Stage 1: Traditional infrastructure: full control, full burden

Teams procured physical servers, managed operating systems, handled security patches, and scaled resources manually. This worked for predictable applications but created bottlenecks as software delivery accelerated.

Stage 2: Cloud computing: faster provisioning, same management overhead

Cloud computing introduced the first layer of abstraction with virtual machines. Companies could provision servers in minutes rather than weeks, paying only for resources consumed. Yet teams still managed operating systems, configured load balancers, and handled scaling manually.

Stage 3: Containers: automated scaling, retained infrastructure ownership

Containers brought the next abstraction layer, packaging applications with dependencies for consistent deployment across environments. Kubernetes and similar orchestration tools automated much of the scaling complexity, but teams still owned the infrastructure layer, monitoring cluster health, managing nodes, and planning capacity.

Stage 4: Serverless: complete abstraction, complete vendor dependency

Serverless architecture’s core principles took abstraction to its logical conclusion. Teams write functions that execute in response to events, with cloud providers handling everything else: scaling, availability, security patches, and runtime management. No servers to configure, no capacity planning, no infrastructure to monitor.

FYI: Abstraction = hiding complexity behind a simpler interface. Each "layer of abstraction" means someone else handles more of the technical details so you can focus on your application.

Why abstraction accelerated adoption

Development teams could ship features faster without infrastructure concerns, and companies only paid for actual execution time rather than idle capacity. For startups racing to market, this value proposition proved irresistible.

However, every abstraction layer transfers control from your team to the vendor. With traditional servers, you control the stack. With serverless, you control only your application code, and everything else belongs to AWS, Azure, or Google Cloud.

Teams celebrate not managing servers until they need to debug performance issues across distributed functions, optimize costs across hundreds of microservices, or migrate to a different provider.

Abstraction provides genuine value, but your product must be able to afford the long-term cost of giving up control.

Mind Studios' insight: The abstraction layers that seem irrelevant during MVP development become critical constraints during scale. We recommend documenting which serverless services are "core" versus "peripheral" from day one. This 30-minute exercise during architecture planning can save 6+ months of refactoring when you need to optimize costs or migrate components.

We assess whether serverless constraints align with your business model before lock-in becomes expensive. Contact our team for an architecture review.

When serverless actually works well

Serverless architecture delivers measurable value in specific scenarios where its characteristics align with business needs. Understanding when to use a serverless architecture requires evaluating your product stage, traffic patterns, and strategic priorities.

When speed to market justifies future technical debt

MVPs and early-stage products represent serverless's strongest use case. When speed to market outweighs long-term concerns, serverless removes infrastructure as a bottleneck. A three-person startup can launch a production application in weeks without hiring DevOps engineers or negotiating with cloud sales teams.

The numbers support this approach. Companies using serverless for initial development can reduce time-to-market by several months compared to the traditional infrastructure setup. For startups where survival depends on validating product-market fit quickly, this acceleration justifies accepting future technical debt.

When clients come to us wanting to launch an MVP in 8–12 weeks, we often recognize that serverless architecture fits their timeline. The conversation shifts when they describe plans for predictable growth or enterprise sales. Then we explain why investing an extra month in proper infrastructure saves six months of refactoring later.

— says Dmytro Dobrytskyi, CEO of Mind Studios.

Traffic spikes that would crush traditional infrastructure

Spiky or unpredictable workloads show serverless's technical advantages most clearly. Applications that experience massive traffic variations (like concert ticket sales, holiday shopping, or content going viral) benefit from automatic scaling without pre-provisioning capacity for peak load.

Netflix exemplifies this pattern. Their video encoding pipeline uses serverless functions to handle spikes when new content launches, automatically scaling from baseline to thousands of concurrent executions within seconds. When demand drops, costs drop proportionally.

Mind Studios' recommendation: If your workload meets 3 out of 4 characteristics above, serverless likely works well. If it only meets 1–2, consider a hybrid architecture instead. We've found that forcing serverless workloads onto workloads that don't naturally align with these patterns typically costs 2–3x more than traditional infrastructure within 18 months.

Where serverless works without risking your competitive advantage

Internal tools and non-core systems present another strong fit. Companies successfully use serverless for employee dashboards, automated report generation, data transformation pipelines, and background processing tasks. These applications tolerate higher latency, run intermittently, and do not represent core business IP.

- Slack demonstrates this approach with its bot and integration marketplace. Rather than forcing developers to manage infrastructure for simple bots, Slack enables serverless backends that scale automatically when messages arrive. Developers focus on bot logic while Slack handles execution infrastructure.

- The Coca-Cola Company achieved 40% operational cost reduction after migrating parts of their architecture to serverless, while also cutting IT ticket volume by 80%. Their success came from strategic selection: identifying workloads where serverless strengths aligned with business needs.

What success looks like:

Success looks different depending on your goals:

- For MVPs: launching quickly and gathering user feedback;

- For spiky workloads: handling peak load without provisioning excess capacity;

- For internal tools: reducing operational overhead for non-critical systems.

What these scenarios share is acceptance that the pros of serverless architecture outweigh concerns about lock-in, cost unpredictability, or migration complexity. When your business can absorb those trade-offs, serverless delivers real value.

The critical mistake is assuming these benefits transfer to all scenarios. The same characteristics that make serverless excellent for MVPs create problems for mission-critical systems with predictable high load. Understanding where serverless works well requires an honest assessment of your product's stage, growth trajectory, and strategic priorities.

Mind Studios’ recommendation: Start by mapping your application's actual execution patterns over 30 days before committing to serverless. We've seen companies migrate to serverless, assuming "variable load" when 80% of their traffic was a predictable baseline, making traditional infrastructure 40% cheaper. Track cold start frequency, average execution duration, and invocation patterns to make data-driven decisions.

The vendor lock-in reality and hidden long-term costs

Vendor lock-in with serverless architecture is structural and inevitable. The tighter your integration with cloud-native services, the more expensive the migration becomes.

How vendor dependency accumulates without you noticing

Tight coupling to cloud-native services happens gradually. You start with AWS Lambda for computation, add DynamoDB for data, integrate EventBridge for orchestration, implement Cognito for authentication, and use Step Functions for workflows.

Each service works seamlessly with the others within AWS's ecosystem. Six months later, you realize your architecture assumes AWS-specific behaviors that have no equivalent elsewhere.

Why migration costs often exceed original development budgets

The cons of serverless architecture become apparent during migration attempts:

- Lambda functions reference AWS SDK calls specific to their services;

- DynamoDB queries use proprietary APIs without direct equivalents in Azure CosmosDB or Google Firestore;

- EventBridge rules translate awkwardly to other event systems;

- Authentication flows built on Cognito require complete reimplementation.

Migration complexity scales with system age and integration depth. A startup with three Lambda functions can migrate in weeks. An enterprise with 500+ functions, dozens of data stores, and complex orchestration pipelines faces months of work. Some companies discover migration costs exceeding the original development budget.

Exit costs extend beyond engineering effort. Data transfer fees (egress charges for moving data out of a cloud provider) can reach hundreds of thousands of dollars for companies with terabyte-scale datasets. These fees make migration financially prohibitive even when technically feasible.

When your monthly bill spikes 300% overnight

Cost opacity at scale represents another hidden expense. Serverless's "pay for what you use" model sounds appealing until you realize you're paying for hundreds of different things: function invocations, execution duration, memory allocation, data transfer between services, API gateway requests, database operations, and storage requests.

A minor code change triggered excessive function calls, generating millions of billable requests before anyone noticed. With traditional infrastructure, the same spike would have caused performance degradation, not a budget crisis.

Mind Studios’ recommendation: Evaluate vendor lock-in as a business risk, not a technical problem. If your product represents core IP, differentiated value, or long-term competitive advantage, design architecture that preserves optionality. If speed to market matters more than future flexibility, accept lock-in deliberately and plan accordingly.

The talent trap: hiring and training in vendor-specific ecosystems

Organizational dependency creates less obvious but equally significant costs. Your team develops expertise in AWS-specific services. Hiring becomes harder because developers with experience in your exact stack are rare. Training new engineers takes longer because they must learn not just your application but AWS's entire ecosystem.

Best practices for serverless architecture often accelerate this dependency. Cloud providers encourage using their services because integration is simpler. The path of least resistance leads deeper into vendor-specific territory.

Treating lock-in as a business decision, not an accident

Vendor lock-in becomes a problem only when it is accepted unknowingly. Companies that deliberately choose lock-in for speed, while planning exit strategies for core components, maintain optionality. Those that drift into lock-in discover constraints when business needs demand flexibility.

Need clarity on your architecture choices? Book a free consultation with Mind Studios to evaluate your serverless strategy and design exit plans before lock-in becomes irreversible.

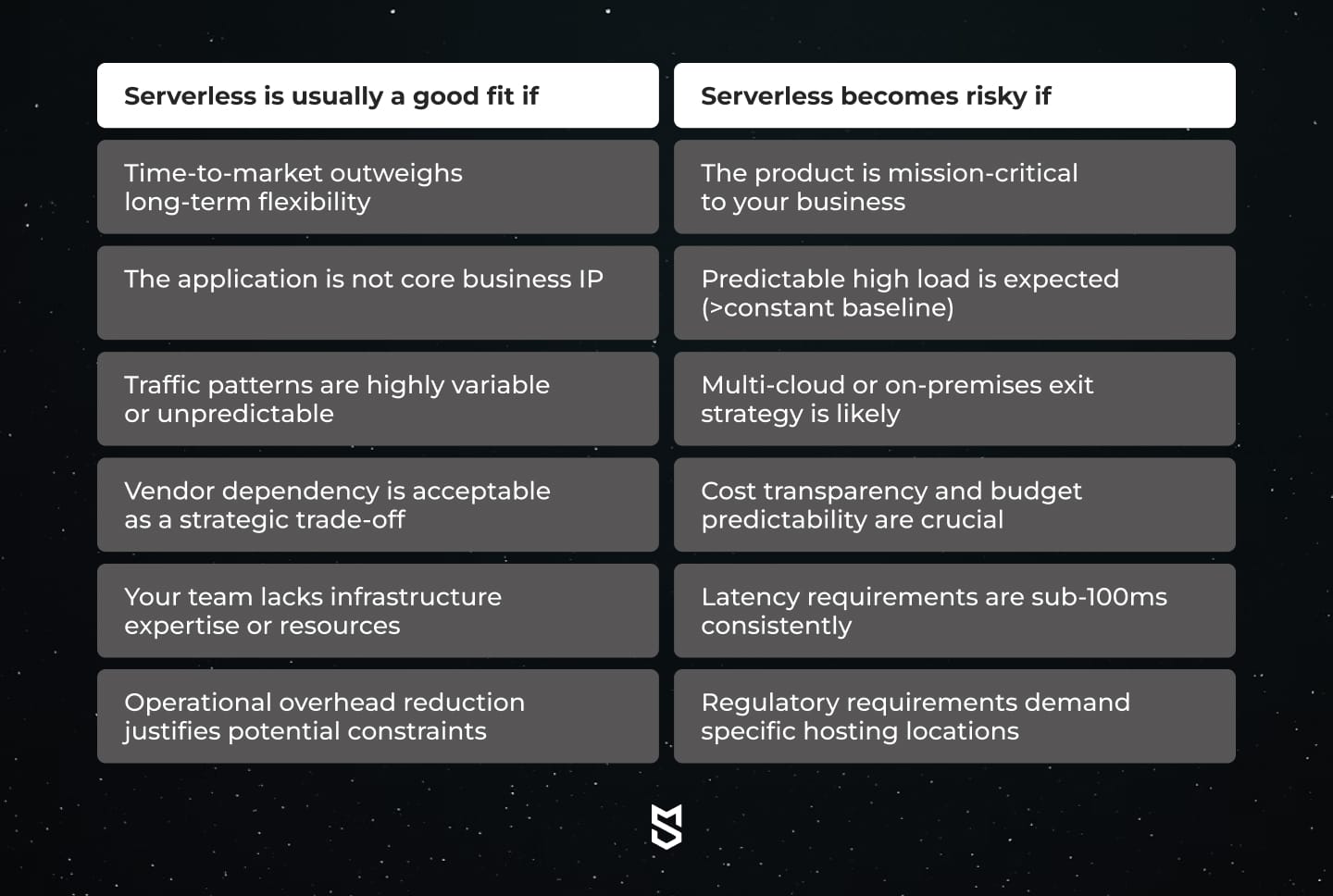

Clear decision criteria: Should you use serverless?

Deciding whether to use a serverless architecture requires evaluating your situation against clear criteria rather than following industry trends. The decision framework below helps make rational choices.

Strategic assessment questions

The best practices for serverless architecture start with an honest assessment. If your application will serve 1,000 concurrent users 24/7, paying per-execution for millions of function calls likely costs more than dedicated infrastructure. Serverless shines with variable load, not constant high throughput.

Consider your product lifecycle:

- An MVP testing market demand can absorb future refactoring costs if the concept succeeds;

- A platform serving enterprise customers requires architectural decisions that survive multi-year contracts and compliance audits.

Data sensitivity adds another dimension. Applications handling healthcare records, financial transactions, or personally identifiable information face stricter requirements. Serverless providers meet compliance certifications, but the shared infrastructure model creates risks that some organizations cannot accept.

Team capability matters

Team capability matters more than most admit. Serverless reduces infrastructure burden but introduces new complexities: distributed tracing, cost optimization, cold start mitigation, and managing hundreds of small functions. Teams without strong observability practices often find serverless systems harder to operate than traditional systems.

Mind Studios’ insight from 50+ architecture consultations

Companies succeed with serverless when they evaluate it as a business decision first, a technical decision second. Ask these questions:

- What happens if our traffic grows 10x in six months? Serverless handles growth automatically, but will costs remain sustainable?

- Can we accept being tightly coupled to AWS/Azure/Google for 3+ years? Lock-in is manageable if acknowledged upfront.

- Does our competitive advantage depend on infrastructure control? Some products require architectural flexibility that serverless constraints.

- Will our team maintain velocity as the function count grows? Serverless reduces some complexities while introducing others.

Answering honestly reveals whether serverless aligns with your strategic priorities. Many companies find hybrid approaches work best: serverless for peripheral systems, dedicated infrastructure for core services.

The framework also illuminates when serverless is actively harmful. Mission-critical systems with predictable load, strict latency requirements, and deep technical complexity rarely benefit from serverless constraints. The operational simplicity you gain costs more than the flexibility you lose.

Embracing serverless architecture works when you understand the trade-offs and accept them deliberately. The decision should optimize for your situation, not cloud provider marketing or industry momentum.

Serverless vs. traditional vs. hybrid: a business comparison

Understanding serverless architecture comparison across deployment models requires looking beyond technical features to business implications. Each approach offers distinct advantages and constraints that impact your product's trajectory.

| Criteria | Serverless | Traditional (VMs/Containers) | Hybrid |

|---|---|---|---|

| Cost predictability | Low (usage-based billing creates variability) | High (fixed monthly costs regardless of usage) | Medium (fixed baseline + variable scale costs) |

| Operational control | Low (vendor manages infrastructure entirely) | High (full control over stack and configuration) | Medium (control where needed, abstraction elsewhere) |

| Scaling economics | Efficient for variable/spiky load, expensive at constant high throughput | Efficient for sustained load, requires capacity planning | Optimized (scale expensive components selectively) |

| Long-term adaptability | Constrained by vendor ecosystem | High (can migrate workloads relatively easily) | High (compartmentalized dependencies reduce lock-in) |

| Migration effort | High (deep vendor integration makes exit costly) | Medium (requires re-provisioning but portable) | Medium (migrate serverless components strategically) |

Variable pricing: brilliant until it becomes a budget crisis

Serverless charges per-execution, making costs proportional to usage. This works brilliantly for applications with sporadic traffic.

However, costs can spiral unpredictably. You can see your serverless bill jump 650% in one month after a feature launched because a retry loop triggers millions of unnecessary function calls. With dedicated infrastructure, the same code would have caused performance degradation rather than a budget crisis.

Traditional infrastructure offers predictability. You pay $500 monthly for a server regardless of whether it handles 1,000 or 1 million requests. The trade-off is paying for idle capacity during low-traffic periods.

Control needs shift as products mature

- Early-stage companies benefit from offloading infrastructure management to cloud providers.

- Growth-stage companies often need control that serverless sacrifices: custom networking configurations, specialized hardware, or specific compliance requirements.

Scaling economics shift based on load patterns. Serverless makes financial sense when traffic varies significantly, handling Black Friday spikes without provisioning year-round capacity. For applications with a consistent baseline load, reserved instances often cost 30–50% less than serverless.

Long-term adaptability becomes critical during major transitions: regulatory changes requiring specific hosting locations, cost optimization demanding alternative providers, or competitive pressures requiring architectural innovation. Serverless constraints make these transitions expensive or impossible.

How to compartmentalize risk and preserve flexibility

Hybrid architecture combines approaches strategically. Core business logic runs on infrastructure you control. Peripheral systems (like background jobs, scheduled tasks, or temporary spikes) use serverless.

Mind Studios deploys hybrid architectures for clients when:

- Core API and database remain on dedicated infrastructure for cost predictability and control;

- Image processing, file transcoding, and email sending use serverless functions for variable demand;

- Internal tools and admin dashboards leverage fully managed services for operational simplicity;

- Data pipelines use serverless for ETL jobs that run on schedules rather than continuously.

This approach preserves flexibility where it matters most. If business needs change, migrating serverless components is manageable because they're compartmentalized.

The serverless architecture comparison reveals no universal winner. The right choice depends on your product stage, growth trajectory, and strategic priorities. What matters is making the decision deliberately rather than inheriting it from cloud provider defaults or industry momentum.

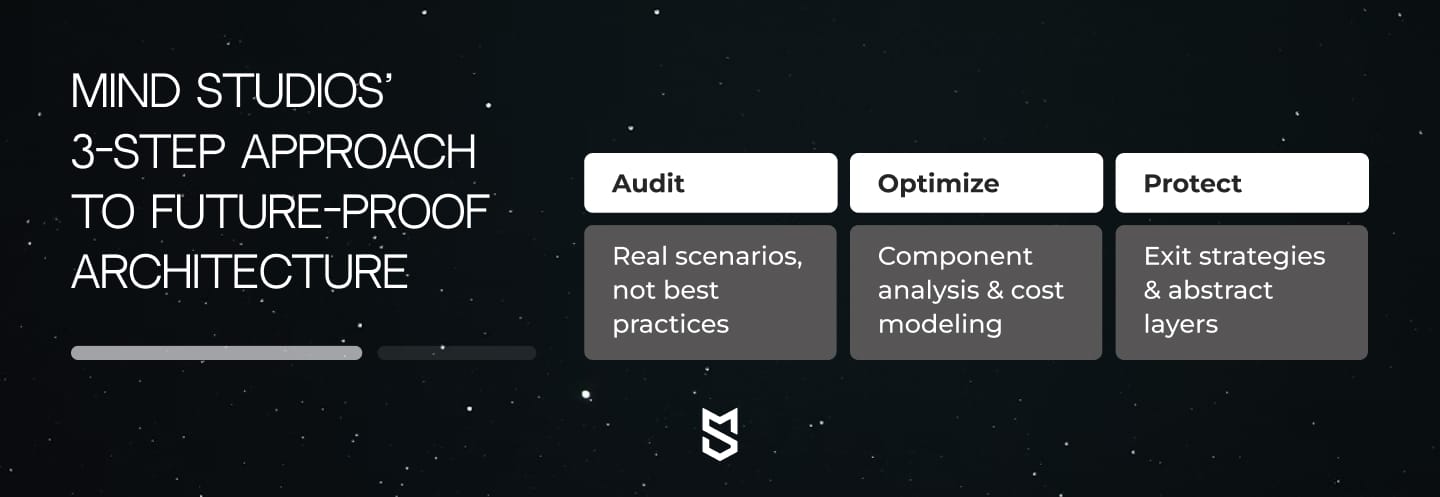

How Mind Studios helps companies avoid architectural dead ends

Architecture decisions made during rapid growth often become constraints later. Mind Studios helps companies design systems that adapt to evolving needs rather than requiring expensive rewrites.

We audit your architecture against real growth scenarios, not best practices

Our approach starts with architecture audits that evaluate current decisions against future scenarios. We examine your serverless implementation, identify tight coupling to vendor services, quantify migration complexity, and project costs at scale.

For a Series A SaaS company, we audited their serverless-first architecture six months post-launch. The system worked well at 500 daily active users, but projected costs at 50,000 users exceeded revenue targets. We designed a hybrid migration path: core API moved to dedicated infrastructure (reducing costs 60%), while background jobs remained serverless (preserving operational simplicity).

We identify which components justify serverless and which drain your budget

One client's architecture used AWS Lambda for everything, including their authentication service that handled constant, predictable load. We moved authentication to containers, cutting that component's costs by 75% while keeping Lambda for truly variable workloads like PDF generation and image processing. Total infrastructure costs dropped 40% without sacrificing scalability.

We design exit strategies before lock-in compounds

Planning exit strategies early matters because vendor lock-in compounds over time. We design architectures with abstraction layers that isolate vendor-specific code, making future migration manageable. This does not mean avoiding serverless, it means containing its scope strategically.

Common patterns we prevent:

- Over-reliance on proprietary services: Using DynamoDB, Cognito, and EventBridge creates three separate lock-in points. We design around commodity protocols where possible.

- Cost surprises at scale: Teams discover their serverless architecture costs 3–5x traditional infrastructure when traffic grows. We model costs across different load scenarios before committing.

- Debugging complexity: 200+ small functions become difficult to trace and optimize. We establish observability strategies before microservice sprawl becomes unmanageable.

Need a development plan tailored to your business requirements? Book a free consultation with Mind Studios to validate your architecture strategy before committing to expensive paths.

Wrapping up

Serverless is a strategic choice, not a default. The technology delivers genuine value (like automatic scaling, reduced operational overhead, faster time-to-market) when applied to problems it solves well.

The biggest risk is choosing serverless without considering the long-term view. Vendor lock-in, cost unpredictability, and architectural constraints matter less at launch than at scale. Companies that optimize for optionality rather than trends maintain flexibility when business needs change.

The smartest teams evaluate serverless through business criteria:

- Does time-to-market justify future refactoring costs?

- Can we accept vendor dependency for this component?

- Will our competitive advantage depend on infrastructure control?

Embracing serverless architecture works when you understand the trade-offs and accept them deliberately. The decision should optimize for your situation, not cloud provider marketing or industry momentum.

- Use serverless for peripheral systems, variable workloads, and rapid prototypes.

- Use dedicated infrastructure for core services, predictable high load, and mission-critical operations.

- Use hybrid approaches that preserve flexibility where it matters most.

If you're evaluating serverless or already feeling its limitations, Mind Studios can help you validate, redesign, or future-proof your architecture before costs spiral. Get in touch with our team to discuss your specific situation.