You'll discover why AI bias is a data problem and how it manifests across fintech, logistics, HR-tech, and PropTech. We'll show you the measurable business damage from biased datasets and how custom datasets and domain-aware models reduce the risk of AI bias for business at the design stage.

Highlights:

- 36% of companies suffered direct negative impacts from AI bias in 2024.

- Models perform 5–10% less accurately for underrepresented populations than for majority groups.

- Domain-aware models reduce false negatives for qualified candidates by up to 41%.

AI systems don't usually fail because the algorithms are bad. They fail because the data doesn't reflect reality.

In 2026, AI bias presents a growing problem that can undermine a business’s credibility across fintech, logistics, insurance, HR-tech, and PropTech. Models trained on generic or foreign datasets often behave unpredictably when deployed in new markets, industries, or company sizes. Thus, the risk of AI bias for business is no longer theoretical. It's measurable and costly.

When AI models are built on data that doesn't match your operating environment, they produce wrong recommendations, exclude qualified customers, and damage user trust. According to McKinsey's 2025 research, while 88% of organizations report regular AI use, most haven't scaled these technologies deeply enough to avoid data misalignment problems that create bias.

The question for decision-makers: will your AI system work for the customers, markets, and contexts where you actually operate?

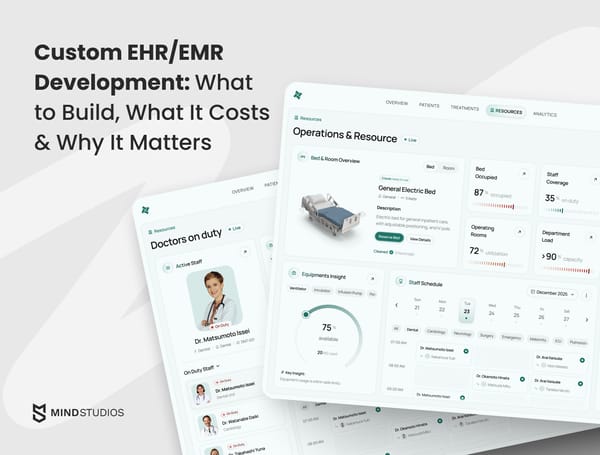

Mind Studios helps companies build AI-based solutions that work in their real-world environment, not just in controlled benchmarks. We audit existing systems for data gaps, design custom datasets aligned with actual operations, and build domain-aware models that account for regional, industry, and scale differences.

Need to evaluate your current AI systems for bias risks? Book a free consultation with our team.

Why AI bias happens by design, not by accident

Most people assume AI bias stems from flawed algorithms or intentional discrimination. The reality is more fundamental: bias is built into the data collection process itself.

"We've audited dozens of AI systems, and the pattern is consistent: companies invest heavily in model architecture while barely questioning their training data. They'll spend months optimizing algorithms, but won't spend a week understanding whose reality their data actually represents. That's where bias gets locked in, long before the first line of code runs," says Dmytro Dobrytskyi, CEO at Mind Studios.

Why training data creates systematic bias

Popular AI models are trained on datasets assembled for convenience, not accuracy. These datasets over-represent certain demographics, regions, and use cases while under-representing others. When models learn from uneven data, they learn uneven patterns.

The result:

- Systems that perform well on average but fail in specific contexts;

- Strong accuracy metrics across millions of predictions, yet consistent failures for specific segments;

- Models that optimize for the most common patterns while treating everything else as noise.

This is how bias in AI models hurts your business: the failures concentrate in specific customer segments, geographic regions, or business types, because training data rarely reflects the full distribution of real-world scenarios.

Mind Studios' tip: Run disaggregated performance tests before launch. A 95% overall accuracy rate might hide 70% accuracy for a specific market segment. Breaking results down by segment reveals the bias that aggregate metrics miss.
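A disaggregated test can be as simple as grouping labeled predictions by segment before computing accuracy. The sketch below is a minimal illustration, not a full evaluation suite: the `accuracy_by_segment` helper, the segment names, and the sample counts are all hypothetical, chosen so that a strong overall score hides a weak segment.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """Return (overall_accuracy, {segment: accuracy}).

    Each record is a (segment, y_true, y_pred) tuple.
    """
    if not records:
        raise ValueError("records must not be empty")
    totals = defaultdict(int)
    correct = defaultdict(int)
    for segment, y_true, y_pred in records:
        totals[segment] += 1
        correct[segment] += int(y_true == y_pred)
    overall = sum(correct.values()) / sum(totals.values())
    per_segment = {s: correct[s] / totals[s] for s in totals}
    return overall, per_segment


# Hypothetical evaluation set: 900 urban records (882 correct)
# and 100 rural records (70 correct).
records = (
    [("urban", 1, 1)] * 882 + [("urban", 1, 0)] * 18
    + [("rural", 1, 1)] * 70 + [("rural", 1, 0)] * 30
)

overall, per_segment = accuracy_by_segment(records)
print(f"overall: {overall:.3f}")
for segment, acc in sorted(per_segment.items()):
    print(f"{segment}: {acc:.3f}")
```

With these made-up numbers the overall accuracy is about 95%, while the rural segment sits at 70%. The same grouping works for any metric you care about, such as false negatives or false positives.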

AI bias: the hidden business risks

The problem compounds when AI moves from making recommendations to making decisions:

| System type | Impact of bias | Business consequence |
|---|---|---|
| Recommendation engine | Occasionally surfaces irrelevant products | User annoyance, minor engagement drop |
| Credit scoring system | Systematically disadvantages certain applicant profiles | Discrimination, regulatory exposure, lost revenue |
| Fraud detection | Higher false positives for specific regions | Customer lockouts, service complaints |
| Hiring automation | Filters qualified candidates from underrepresented groups | Talent loss, diversity setbacks, legal risk |

According to the Stanford AI Index 2025, AI-related incidents are rising sharply, yet standardized responsible AI evaluations remain rare among major industrial model developers.

How data collection methods create bias

Bias is rarely intentional. It's a byproduct of how data is collected, labeled, and reused. Training datasets reflect historical decisions, which often carry embedded assumptions about what's "normal" or "standard."

Common data collection problems:

- Sampling from convenient rather than representative sources;

- Over-weighting historical patterns that reflect past discrimination;

- Missing alternative signals from underrepresented populations;

- Treating majority-market behavior as the universal baseline.

When these assumptions don't transfer to new contexts, the model breaks.

How AI bias manifests across key industries

Recognizing and addressing bias in AI models requires understanding how it appears differently across sectors. The root cause is always a data mismatch, but the patterns of failure vary by industry.

| Industry | Common bias pattern | Typical impact | Why it happens |
|---|---|---|---|
| FinTech | Credit or fraud models fail for specific regions or customer profiles | Qualified applicants are denied credit; high false-positive fraud flags | Training data over-represents major markets and high-credit populations; alternative data sources not incorporated. |
| Logistics | Routing or demand models optimized for major hubs fail in secondary markets | Poor delivery estimates; inefficient route planning; inventory misallocation | Datasets heavily weighted toward high-volume urban corridors; rural and suburban patterns treated as outliers. |
| HR-tech | Screening models reinforce historical hiring patterns | Qualified candidates filtered out; diversity goals undermined | Historical hiring data reflects past biases; models learn to replicate rather than correct these patterns. |
| PropTech | Pricing or risk models misrepresent local markets | Property valuations skewed; investment decisions based on flawed data | Training data dominated by major metros; local market dynamics and neighborhood-level factors underweighted. |

Financial services: accuracy gaps for underrepresented groups

In financial services, Black and Brown borrowers were more than twice as likely to be denied a loan as white borrowers, according to a 2024 Urban Institute analysis.

The issue extends beyond approval rates. Stanford research found that predictive tools are 5–10% less accurate for lower-income families and minority borrowers than for higher-income and non-minority groups, primarily because these populations have thinner credit files and less data available.

Recruitment: amplifying historical bias patterns

In recruitment, the numbers are equally concerning. Nearly all respondents in a Resume Builder survey acknowledged that AI could produce biased outcomes, noting potential for age, gender, socio-economic, and racial bias in automated assessments.

University of Washington research found that when AI systems showed bias in candidate recommendations, human decision-makers mirrored those biases in their selections, amplifying the problem rather than correcting it.

PropTech: regulatory scrutiny on automated valuations

In PropTech, the hidden business risk appears in property valuations and tenant screening. Algorithms can reinforce bias by undervaluing neighborhoods or excluding certain borrower profiles when training data reflects historical market patterns.

The consistent pattern: models trained on majority-market data produce systematically worse outcomes for minority populations, smaller markets, and non-standard cases. These aren't random errors. They're structural problems baked into training data.

Industry-specific bias requires industry-specific solutions. Whether you're in fintech, logistics, HR-tech, or PropTech, we can help you identify where generic models fail and build systems that work for your specific market segments. Contact our tech team for a free consultation.

Mind Studios' insight: Build separate model variants for different market contexts rather than forcing one universal model. Urban routing algorithms, for example, need fundamentally different features than rural logistics models.

Why AI trained in one environment fails in another

Moving an AI model from one context to another is not a scaling problem. It's a context drift problem. Models don't generalize automatically. They carry the assumptions and patterns of their training environment with them.

How context shapes AI performance

| Contrast | Data distribution difference | Common failure mode |
|---|---|---|
| Enterprise vs. SMB | Large companies generate structured data at scale; small businesses have sparse, inconsistent data. | Models trained on enterprise data miss the informal workflows, incomplete records, and resource constraints typical of SMBs. |
| US vs. EU | Regulatory frameworks differ (GDPR vs. sector-specific rules); cultural behaviors vary; business practices diverge. | Compliance assumptions break; user behavior predictions fail; risk profiles don't transfer. |
| Major cities vs. local markets | Urban areas generate dense transaction data; rural/suburban areas have sparser signals and different patterns. | Demand forecasting breaks; pricing models misestimate; supply chain predictions fail. |

3 key mechanisms of context failure

#1: Missing signals in training data

When a model is trained in one environment, it learns which features predict outcomes in that context. Move to a different environment, and critical predictive signals might not even be captured.

Example: A fraud detection model trained on US transactions won't recognize normal patterns in Southeast Asian mobile payments. The data structure is different, the transaction types are different, and the behavioral norms are different.

#2: Misweighted features that dominate decisions

Models often rely heavily on a few strong predictors from their training data. In new contexts, those same features might be misleading.

Example: A credit model that weights homeownership heavily will systematically disadvantage markets where renting is the norm. The model isn't broken. The feature's meaning has changed.

#3: Assumptions baked into datasets that don't transfer

Training data reflects implicit assumptions about what's standard, what's risky, and what's normal. These assumptions rarely hold across contexts.

Example: An HR screening model trained on tech company data will misfire when applied to manufacturing or healthcare roles, where the skill sets, career trajectories, and candidate profiles differ fundamentally.

Mind Studios' recommendation: Before launching in a new market, create a validation dataset from that specific context and test your model on it. If accuracy drops significantly, you need market-specific retraining rather than hoping your existing model will generalize.
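The check described above can be sketched in a few lines: score the model on a home-market validation set and on a new-market one, then compare. Everything here is illustrative. The `needs_market_retraining` helper, the 5-point `max_drop` threshold, and the toy labels are assumptions, and what counts as a "significant" drop depends on your use case.

```python
def accuracy(y_true, y_pred):
    """Share of predictions that match the labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("need two non-empty lists of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def needs_market_retraining(home_accuracy, new_market_accuracy, max_drop=0.05):
    """Flag retraining when accuracy falls by more than max_drop.

    max_drop is a hypothetical tolerance; pick one that fits your risk level.
    """
    return home_accuracy - new_market_accuracy > max_drop


# Toy validation sets (hypothetical): labels plus the model's predictions.
home_labels = [1, 0] * 10
home_preds = home_labels[:19] + [1 - home_labels[19]]          # 19 of 20 correct
new_labels = [1, 0] * 10
new_preds = new_labels[:15] + [1 - y for y in new_labels[15:]]  # 15 of 20 correct

home_acc = accuracy(home_labels, home_preds)
new_acc = accuracy(new_labels, new_preds)
retrain = needs_market_retraining(home_acc, new_acc)
print(f"home: {home_acc:.2f}, new market: {new_acc:.2f}, retrain: {retrain}")
```

In practice the validation set should come from real interactions in the target market, and it needs to be large enough that the accuracy gap is not just sampling noise.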

The adoption-infrastructure gap

According to SHRM's 2025 data, 43% of organizations used AI for HR tasks in 2025, up from 26% in 2024. But 67% of organizations report ongoing challenges with bias management, requiring continuous monitoring and adjustment.

The key insight: copying a "successful" model into a new context often fails because success is context-dependent. A model that works well for large US enterprises won't automatically work for European SMBs or Asian markets, even if the underlying problem seems similar.

When bad datasets lead to real business damage

The consequences of data-driven bias are not hypothetical. They're measurable in lost revenue, operational inefficiency, and reputational damage.

Incorrect automated decisions that teams rely on

When AI systems make decisions based on biased data, those errors propagate through the organization:

- Sales teams chase leads that the AI scores as high-potential, but those leads don't convert;

- Operations allocate inventory based on demand forecasts that miss regional patterns;

- Product teams prioritize features for user segments that the model misidentifies;

Each bad decision compounds the cost.

Discriminatory outcomes that appear unintentionally

In 2025, a federal judge allowed a nationwide collective action under the Age Discrimination in Employment Act to proceed against Workday. The underlying lawsuit alleges that the company's AI hiring tools discriminated by age, race, and disability.

The company faces potential liability not just for direct discrimination but for creating tools that enable discrimination at scale. Similar cases are emerging across industries as regulators and courts recognize that algorithmic bias carries legal consequences.

UX degradation and loss of user trust

Users notice when AI systems treat them unfairly, even if they can't articulate exactly what's wrong:

- A recommendation engine that never surfaces relevant products;

- A chatbot that misunderstands regional language patterns;

- A pricing algorithm that seems arbitrary.

These experiences erode trust. According to Gartner research, only 26% of applicants trust AI to evaluate them fairly, making visible human oversight and clear explanations essential.

Financial losses, churn, and operational inefficiency

The direct costs add up quickly. A 2024 DataRobot survey found that 62% of the companies affected by AI bias lost revenue as a result.

Beyond lost revenue, there's the cost of:

- Manual correction of AI mistakes;

- Customer service complaints and resolution;

- Rebuilding systems after failure;

- Hidden overhead of managing ongoing errors.

An AI deployment that looks cost-effective on paper becomes expensive once you count the cost of managing its mistakes.

Regulatory and reputational exposure

Regulators are increasingly scrutinizing AI systems for bias. The EU AI Act classifies AI used for credit scoring and for recruitment screening as high-risk, which brings requirements for data governance, documentation, and transparency.

In the US, the CFPB made clear that "there are no exceptions to the federal consumer financial protection laws for new technologies." In 2024, it ordered Apple to pay $25 million and Goldman Sachs $45 million in penalties over Apple Card failures in dispute handling and customer disclosures.

Need an experienced development partner to analyze your AI systems and create a bias mitigation plan? Book a free consultation with our experts.

Why off-the-shelf AI is rarely enough

Pre-trained models and vendor solutions offer quick deployment and proven capabilities. For many use cases, they're a good starting point. But they come with structural limitations that matter when AI decisions affect revenue or user access.

Pre-trained models optimize for generality, not specificity

Foundation models are trained on enormous datasets to capture broad patterns. This makes them useful across many contexts but not optimal for any specific one.

What this means in practice:

- A general-purpose language model understands common patterns but misses industry jargon, regional dialects, and domain-specific terminology;

- It will work adequately for generic tasks but underperform on specialized ones;

- Broad capabilities come at the cost of deep accuracy in specific domains.

Fine-tuning has limits without dataset control

Fine-tuning a pre-trained model on your data can improve performance, but you're still constrained by the base model's architecture and initial training.

The constraints:

- If the foundation model learned patterns that don't apply to your context, fine-tuning might not fix them;

- You can adjust weights, but you can't change what the model fundamentally learned about the world;

- The base model's biases often persist despite surface-level customization.

Lack of transparency into vendor training data

Most commercial AI vendors don't disclose their training data sources, collection methods, or data distribution.

What you don't know:

- Which populations are over- or under-represented;

- What assumptions are baked into the model;

- Whether the model's biases will affect your specific use case.

According to AI in Financial Services 2025 research, the pace of AI innovation is increasingly outstripping regulatory capacity, making transparency even more critical.

When to recognize vendor solution limits

Off-the-shelf AI is a starting point, not a finished solution. For applications where accuracy matters across diverse user populations and contexts, custom datasets and domain-aware models are often unavoidable.

How custom datasets and domain-aware models reduce bias

Bias mitigation is not a one-time fix. It's an ongoing process built into how you design, train, and maintain AI systems.

Targeted data collection aligned with real usage

Custom datasets start with understanding your actual operating environment:

- What user populations do you serve?

- What geographic markets do you operate in?

- What edge cases appear regularly in your business?

Collect data that represents these scenarios proportionally, not just what's easy to gather. If you serve both enterprise and SMB customers, your training data should reflect both. If you operate across regions, capture regional differences in behavior, language, and context.
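One way to check proportionality is to compare each segment's share of the training data with its share of real usage. The sketch below assumes you can tag both training rows and production traffic with a segment label. The `representation_gaps` helper, the segment names, and the 10-point cutoff are hypothetical.

```python
from collections import Counter


def representation_gaps(training_segments, production_segments):
    """Return {segment: training_share - production_share}.

    Negative values mean the segment is under-represented in training data
    relative to how often it shows up in production.
    """
    if not training_segments or not production_segments:
        raise ValueError("both inputs must be non-empty")
    train_counts = Counter(training_segments)
    prod_counts = Counter(production_segments)
    n_train = len(training_segments)
    n_prod = len(production_segments)
    segments = set(train_counts) | set(prod_counts)
    return {
        s: train_counts[s] / n_train - prod_counts[s] / n_prod
        for s in segments
    }


# Hypothetical mix: training data is 90% enterprise, production is 60%.
training = ["enterprise"] * 90 + ["smb"] * 10
production = ["enterprise"] * 60 + ["smb"] * 40

gaps = representation_gaps(training, production)
# Assumed cutoff: flag segments short by more than 10 percentage points.
underrepresented = sorted(s for s, gap in gaps.items() if gap < -0.10)
print(gaps)
print("collect more data for:", underrepresented)
```

Here the SMB segment is about 30 percentage points short in the training set, which tells you where targeted data collection should start.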

Domain-specific feature selection

Generic models use features that work on average across many domains. Domain-aware models use features that matter in your specific context.

| Context | Generic features | Domain-specific features |
|---|---|---|
| Mobile payments (Southeast Asia) | Transaction amount, frequency | Mobile wallet provider, peer-to-peer patterns, QR code usage |
| US credit cards | Card type, merchant category | Credit bureau scores, account history depth |
| Manufacturing recruitment | Education level, years of experience | Certification types, equipment operation skills, shift flexibility |
| Tech recruitment | Education level, years of experience | GitHub activity, technical stack diversity, open-source contributions |

A fraud detection system for mobile payments in Southeast Asia needs different features than one built for US credit cards. The transaction types differ, the behavioral patterns differ, and the risk indicators differ. Feature engineering based on domain knowledge often matters more than model complexity.

Validation in production environments

Lab testing on clean, balanced datasets doesn't predict real-world performance. Validate models on actual production data, including the messy, incomplete, and edge-case scenarios you'll encounter daily.

What to validate:

- Performance across user segments;

- Accuracy in different geographies;

- Consistency across transaction types;

- Behavior on incomplete or noisy data.

Use this feedback to identify where the model breaks and what data gaps need filling.

Continuous feedback and retraining loops

User behavior changes, markets shift, and new patterns emerge. Static models degrade over time as the world they were trained on diverges from current reality.

Build systems that:

- Capture feedback automatically;

- Detect performance drift in real time;

- Trigger retraining when accuracy drops below thresholds;

- Incorporate new data without manual intervention.

This feedback loop is how you keep models aligned with reality.
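A retraining trigger of this kind can start as a rolling window over recent labeled outcomes. The sketch below is a simplified illustration: the `AccuracyMonitor` class, the window size, and the threshold are hypothetical, and a production system would also need delayed-label handling and per-segment tracking.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent predictions (hypothetical helper)."""

    def __init__(self, window=100, threshold=0.90):
        self.outcomes = deque(maxlen=window)  # True where prediction was correct
        self.threshold = threshold

    def record(self, y_true, y_pred):
        self.outcomes.append(y_true == y_pred)

    def rolling_accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_retrain(self):
        # Wait for a full window so a few early errors don't trigger retraining.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.threshold


monitor = AccuracyMonitor(window=10, threshold=0.80)

for _ in range(10):          # ten correct predictions fill the window
    monitor.record(1, 1)
before = monitor.should_retrain()

for _ in range(3):           # three errors push out three correct outcomes
    monitor.record(1, 0)
after = monitor.should_retrain()

print(before, monitor.rolling_accuracy(), after)
```

The window size trades responsiveness for stability: a short window reacts quickly but fires on noise, while a long one is steadier but slower to notice real drift.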

Ready to build AI systems designed for bias resistance from day one? Let's discuss your data collection strategy, validation approach, and continuous monitoring needs for your specific industry and use case.

How Mind Studios helps make profitable business decisions

We approach AI development differently from most vendors. Instead of promoting ready-made solutions, we help companies build systems that work in their actual operating environment.

Auditing existing AI systems for hidden bias and data gaps

Our audits start with understanding what your AI system is supposed to do versus what it actually does:

- Map data flows and examine training datasets;

- Test model performance across different user segments and contexts;

- Look for performance disparities that indicate bias;

- Identify which populations are underrepresented in training data;

- Find where assumptions baked into models don't match your reality.

Designing custom datasets aligned with business reality

Dataset design starts with operational requirements, not technical convenience. We work with your teams to understand the full range of scenarios your AI will encounter: different customer types, market conditions, edge cases, and failure modes. We design data collection strategies that capture this diversity proportionally.

Building or adapting domain-aware models

Domain-aware models optimize for accuracy in specific contexts rather than average performance:

- Select features based on domain knowledge, not just statistical correlation;

- Validate on realistic test sets that include edge cases and minority segments;

- Design models that explain decisions in domain-relevant terms.

Monitoring and retraining systems over time

AI systems degrade as the world changes. We build monitoring infrastructure that tracks performance continuously:

- Set up automated alerts for accuracy drops;

- Monitor performance disparities across segments;

- Detect data drift that indicates training data is becoming stale;

- Design retraining workflows that incorporate new data regularly.
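Data drift can be estimated without labels by comparing a feature's distribution at training time with its distribution today. One widely used measure is the Population Stability Index (PSI), sketched below. This is not a description of Mind Studios' tooling: the `psi` helper, the bin edges, and the transaction amounts are hypothetical, and the 0.25 alert level is a common rule of thumb rather than a fixed standard.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature.

    edges are sorted bin boundaries; eps avoids log(0) for empty bins.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            bin_index = sum(v >= e for e in edges)
            counts[bin_index] += 1
        return [max(c / len(values), eps) for c in counts]

    e_shares, a_shares = shares(expected), shares(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares)
    )


# Hypothetical transaction amounts at training time vs. in production.
training_amounts = [10] * 50 + [60] * 30 + [150] * 20
production_amounts = [10] * 20 + [60] * 30 + [150] * 50
edges = [50, 100]  # assumed bins: under 50, 50 to 99, 100 and above

drift = psi(training_amounts, production_amounts, edges)
no_drift = psi(training_amounts, training_amounts, edges)
print(f"PSI: {drift:.3f}, alert: {drift > 0.25}")
```

A PSI near zero means the distributions match. In this made-up example the mix of small and large transactions has flipped, so the score lands well above the alert level and the feature would be flagged for review.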

The key insight from our work: bias isn't primarily a technical problem. It's a data problem. Companies that invest in understanding their data before they invest in model complexity get better results.

Conclusion

AI bias is not just a moral concern. It's a business risk multiplier.

Companies that deploy AI systems without understanding their data limitations risk building products that fail users, exclude qualified customers, and expose themselves to regulatory action.

The root cause is consistent: models trained on generic, convenience-sampled, or majority-market data produce systematically worse outcomes for:

- Minority populations

- Smaller markets

- Non-standard cases

These are structural problems baked into how AI is developed and deployed.

Off-the-shelf AI can be a starting point, but for applications where decisions affect people, money, or access, it's rarely sufficient. The companies succeeding with AI in 2026 are those that treat data quality and domain alignment as strategic priorities, not afterthoughts.

If your AI needs to work across real markets and real users, Mind Studios can help you audit, adapt, or rebuild it the right way. We focus on understanding your operating environment first, then building AI systems that work in that reality, not just in controlled benchmarks. Contact our tech team for a free consultation.